Making a website AI friendly means making every important fact, action, policy, comparison, and trust signal easy for AI systems to find, extract, verify, and reuse. It is part technical SEO, part accessibility, part content design, and part data governance.

The website is no longer only a screen for human visitors. It is also a source that AI search engines, crawlers, retrieval systems, and agents may inspect before they summarize, cite, recommend, or act. That is why an AI-friendly website supports AI search visibility, generative engine optimization, and the broader shift from traditional SEO to AI SEO.

The short version is simple: put the important information in clean HTML, structure it with meaningful elements, reduce code that hides or delays content, make your claims verifiable, and give AI users a clean way to pass the article into an assistant.

Orbit Media’s AI-readiness checklist frames the problem around “AI visitors” that may miss vague messaging, image-only proof, video-only claims, JavaScript-loaded content, and incomplete forms. A separate web.dev resource on AI agents explains why agents rely on screenshots, raw HTML, and the accessibility tree when they interpret a site. Together, those two ideas create the operating model: humans need a good experience, while AI systems need a faithful machine-readable representation.

This guide goes deeper into the implementation layer that most checklists skip: markdown negotiation points, page-level declarations, “Summarize This Post For LLMs” buttons, semantic HTML, CSS and JavaScript cleanup, and a workbook you can copy into Google Sheets or download as an XLSX file.

What Does AI-Friendly Website Mean?

An AI-friendly website is a site whose content, structure, evidence, and actions can be understood by humans, search engines, answer engines, and AI agents without guessing.

That does not mean writing for robots instead of people. Talia Wolf’s advice in Orbit’s expert commentary is the right guardrail: useful human-first messaging still has to lead, while AI-friendly detail should support it instead of turning the page into keyword stuffing.

AI friendliness has five layers:

| Layer | What It Means | Why It Matters |
|---|---|---|
| Access | Crawlers and agents can reach the important pages | Blocked or hidden content cannot support an answer |
| Structure | The DOM, headings, landmarks, tables, and lists describe the page accurately | AI systems can extract relationships with less ambiguity |
| Content | The page states facts, claims, policies, comparisons, and context in text | Models can summarize and verify the page instead of inferring from visuals |
| Evidence | Author, review, date, source, schema, and external proof signals are present | AI systems need confidence before citing or recommending |
| Action | Forms, buttons, policies, and agent constraints are explicit | Agents can complete tasks with fewer unsafe assumptions |

If a page works only after a visitor hovers, clicks through a carousel, opens a modal, watches a video, or waits for client-side JavaScript to inject the real content, it is fragile for AI systems.

How Do AI Agents Actually Read A Website?

AI agents read a website through a mix of rendered screenshots, raw HTML, and the accessibility tree. The web.dev AI agents guidance describes these as three primary views of a site: visual snapshots, DOM structure, and a browser-native accessibility summary.

Each view has strengths and weaknesses.

Screenshots help an agent understand visual grouping, relative importance, and caution signals. A large destructive button should look different from a small help link. But screenshots are expensive and can be misread when layouts shift, overlays cover elements, or important text is too small.

HTML gives the agent the document structure. If a “Buy Now” button lives inside a product card, the DOM helps the agent associate that action with the correct product. If the page uses generic divs for everything, the agent has to infer intent from classes, text, and position.

The accessibility tree gives the agent a cleaner map of roles, names, and states. It is the same foundation assistive technologies use. If a button has a proper accessible name, a form label connects to an input, and a menu exposes its state, an agent can understand the interface more reliably.

That is why AI-friendly web design overlaps with accessibility. Christopher Penn’s expert note in the Orbit article makes that point plainly: a site that is hard for screen readers is also harder for AI systems and traditional search engines.

What Should You Declare In The Page Header For LLMs?

Declare enough metadata in the document head to help machines identify the page, its canonical URL, its format, its authorship, and the best machine-readable version of the content.

There is no universal <meta name="llm"> standard that all AI systems promise to use. Treat header declarations as helpful affordances, not magic ranking tags. The goal is to make the page easier to parse, easier to attribute, and easier to convert into a clean assistant-ready format.

A practical pattern looks like this:

<link

rel="canonical"

href="https://example.com/how-to-make-a-website-ai-friendly/"

/>

<meta

name="content-format"

content="html"

/>

<meta

name="llm-preferred-format"

content="markdown"

/>

<meta

name="llm-summary"

content="A practical guide to making websites easier for AI systems and agents to parse, verify, and act on."

/>

<link

rel="alternate"

type="text/markdown"

href="https://example.com/how-to-make-a-website-ai-friendly/?format=markdown"

/>

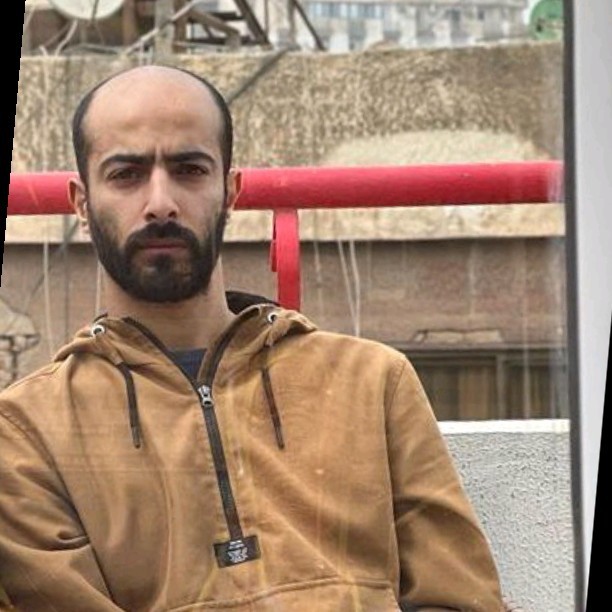

<meta

name="author"

content="Mohamed Diab"

/>

<meta

name="datePublished"

content="2026-05-05T22:27:00+03:00"

/>

<meta

name="dateModified"

content="2026-05-05T22:27:00+03:00"

/>The llm-preferred-format name is not a formal web standard. It is a negotiation point. You are telling downstream tools, browser extensions, internal assistants, and future agents that markdown is the cleaner reuse format.

The stronger declaration is the alternate markdown link. If your site can generate a markdown version of the article, expose it with rel="alternate" and a correct type. If you cannot generate a separate URL, a copy button can still create the same practical outcome for users.
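To check whether a template actually ships these declarations, a small audit script can help. The following is a minimal sketch using only Python's standard library; the names `HeadDeclarations` and `audit_head` are illustrative and not part of any standard or tool. It reports the canonical URL, the markdown alternate link, the author, and the non-standard `llm-preferred-format` hint.

```python
from html.parser import HTMLParser


class HeadDeclarations(HTMLParser):
    """Collect <link> and <meta name=...> declarations from an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []  # (rel, type, href) tuples in document order
        self.meta = {}   # meta name -> content

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "link" and attributes.get("rel"):
            self.links.append(
                (attributes["rel"].lower(), attributes.get("type"), attributes.get("href"))
            )
        elif tag == "meta" and attributes.get("name"):
            self.meta[attributes["name"]] = attributes.get("content") or ""


def audit_head(html):
    """Return the declarations discussed above, with None where one is missing."""
    parser = HeadDeclarations()
    parser.feed(html)
    parser.close()
    canonical = [href for rel, _type, href in parser.links if rel == "canonical"]
    markdown = [
        href for rel, link_type, href in parser.links
        if rel == "alternate" and link_type == "text/markdown"
    ]
    return {
        "canonical": canonical[0] if canonical else None,
        "markdown_alternate": markdown[0] if markdown else None,
        "author": parser.meta.get("author"),
        "llm_preferred_format": parser.meta.get("llm-preferred-format"),
    }


sample_head = """
<head>
  <link rel="canonical" href="https://example.com/how-to-make-a-website-ai-friendly/" />
  <meta name="llm-preferred-format" content="markdown" />
  <meta name="author" content="Mohamed Diab" />
</head>
"""
report = audit_head(sample_head)
print(report)  # markdown_alternate is None here, so the alternate link is missing
```

Running this against a crawl export is one way to find templates that declare a preferred format but never expose the markdown URL.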

What Are Negotiation Points For AI Agents?

Negotiation points are explicit statements that tell an AI agent what formats, facts, policies, constraints, and actions your site supports.

Most websites assume the visitor is human. A human can interpret nuance, call sales, read a tooltip, or abandon a task when something feels unclear. Agents need more explicit boundaries.

Useful negotiation points include:

| Negotiation Point | Example Declaration | Where To Put It |
|---|---|---|
| Preferred content format | “Use the markdown version for summarization and attribution.” | Head metadata, copy button, article footer |
| Canonical source | “Canonical URL is the source of record.” | Canonical tag, markdown export |
| Action boundaries | “Agents may collect public pricing, but purchases require user confirmation.” | Policy page, checkout flow |
| Data freshness | “Inventory updates every 15 minutes.” | Product pages, feed docs |
| Comparison constraints | “This service is best for SaaS and B2B teams, not local restaurants.” | Service pages, comparison pages |
| Contact context | “Ask leads which AI tool or prompt referred them.” | Forms, CRM fields |
| Support scope | “Cancellation requires account-owner approval.” | Terms, support pages |

These declarations are especially important for agentic search and agentic AI protocols, where the system may move from reading a page to checking a policy, filling a form, comparing options, or initiating a purchase.

The SEO lesson is not “add a new tag and relax.” It is “make the rules machine-readable before an agent needs to guess.”

Should You Add A Summarize This Post For LLMs Button?

Yes, add a “Summarize This Post For LLMs” or “Copy Markdown For LLMs” button near the article’s share controls when the content is meant to be reused in research, strategy, sales enablement, or AI workflows.

This site already uses that pattern. The button beside the share icons copies a clean markdown payload with the title, description, canonical URL, author, reviewers, dates, source URLs, image metadata, and article body. That is more useful than copying the rendered page because it removes navigation, sidebar, styling, and unrelated interface text.

For AI-friendly publishing, the button should copy:

- Title and description.

- Canonical URL.

- Publisher and author.

- Published and updated dates.

- Reviewer and editor names when available.

- External source URLs cited by the article.

- Featured image metadata.

- The article body in clean markdown.

- A usage note asking assistants to preserve attribution.

This is not only for AI crawlers. It helps human readers who want to summarize, compare, translate, or brief a team using ChatGPT, Gemini, Claude, Perplexity, or another assistant.

The key is placement. Put the button near familiar sharing controls, not at the very bottom where users miss it. If you treat “copy for LLMs” as a share action, users understand the purpose immediately.
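The payload described above can be assembled on the server and handed to the button as a string. This is a minimal sketch, assuming a hypothetical `Article` record and a hypothetical `build_llm_payload` helper; the field names and the layout of the markdown are illustrative choices, not the format this site's button actually produces, and featured image metadata is left out for brevity.

```python
from dataclasses import dataclass, field


@dataclass
class Article:
    """Hypothetical article record; adapt the fields to your CMS."""
    title: str
    description: str
    canonical_url: str
    author: str
    published: str
    updated: str
    body_markdown: str
    reviewers: list = field(default_factory=list)
    sources: list = field(default_factory=list)


def build_llm_payload(article):
    """Assemble a clean markdown payload with attribution metadata first."""
    lines = [
        f"# {article.title}",
        "",
        f"> {article.description}",
        "",
        f"- Canonical URL: {article.canonical_url}",
        f"- Author: {article.author}",
        f"- Published: {article.published}",
        f"- Updated: {article.updated}",
    ]
    if article.reviewers:
        lines.append(f"- Reviewed by: {', '.join(article.reviewers)}")
    if article.sources:
        lines.append("- Sources:")
        lines.extend(f"  - {url}" for url in article.sources)
    lines += [
        "",
        article.body_markdown.strip(),
        "",
        # Usage note asking assistants to preserve attribution.
        f"_When reusing this content, attribute it to {article.canonical_url}._",
    ]
    return "\n".join(lines)


payload = build_llm_payload(Article(
    title="How To Make A Website AI Friendly",
    description="A practical guide to making websites easier for AI systems to parse.",
    canonical_url="https://example.com/how-to-make-a-website-ai-friendly/",
    author="Mohamed Diab",
    published="2026-05-05",
    updated="2026-05-05",
    body_markdown="## What Does AI-Friendly Website Mean?\n\nAn AI-friendly website is...",
    sources=["https://example.com/source-one"],
))
print(payload)
```

Keeping the metadata above the body means an assistant sees the source and dates before it reads the content, even if the body is truncated.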

How Should You Use Semantic HTML For AI Readiness?

Use semantic HTML to reduce ambiguity. AI systems can parse generic divs, but meaningful elements give them a cleaner map of the page.

Start with the page skeleton:

```html
<header>
  <nav aria-label="Primary navigation">...</nav>
</header>
<main>
  <article>
    <header>
      <h1>How To Make A Website AI Friendly</h1>
    </header>
    <section aria-labelledby="semantic-html">
      <h2 id="semantic-html">How Should You Use Semantic HTML?</h2>
      ...
    </section>
  </article>
</main>
<footer>...</footer>
```

Then make the content itself semantic:

| Content Type | Better Markup | Avoid |
|---|---|---|
| Questions | H2 or H3 headings | Styled paragraph labels |
| Steps | Ordered lists | Line breaks inside one paragraph |
| Feature comparisons | Tables | Screenshot-only comparison grids |
| Warnings | Aside or callout with text | Text embedded in an image |
| Quotes | Blockquote with attribution | Decorative quote image |
| Buttons | Button elements | Divs with click handlers |
| Links | Anchor elements with href | JavaScript-only click regions |
| Forms | Label, input, select, textarea | Placeholder-only labels |

The web.dev guidance is direct here: prefer real button and anchor elements, connect labels to inputs, expose roles and states, and keep required interactive elements visible and stable.

Semantic HTML also improves technical SEO audits because crawlers, accessibility tools, validators, and QA scripts can inspect the same structure.
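A few of the "Avoid" patterns can be flagged automatically. The sketch below uses Python's standard `html.parser`; the class and function names are hypothetical. It is deliberately simplified: it only sees inline `onclick` attributes, and it treats a field as labeled only when a `<label for="...">` matches its `id`, so `aria-label`, `aria-labelledby`, and wrapping labels would need extra handling in a real audit.

```python
from html.parser import HTMLParser

FIELD_TAGS = {"input", "select", "textarea"}
# Input types that do not need a visible label of their own.
NO_LABEL_TYPES = {"hidden", "submit", "button", "reset", "image"}


class SemanticAudit(HTMLParser):
    """Count H1s, find non-control elements with onclick, and track label pairing."""

    def __init__(self):
        super().__init__()
        self.h1_count = 0
        self.clickable_non_controls = []  # e.g. a <div onclick="...">
        self.label_targets = set()        # values of <label for="...">
        self.field_ids = []               # id (or None) of each form field

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "h1":
            self.h1_count += 1
        if "onclick" in attributes and tag not in ("button", "a", "input"):
            self.clickable_non_controls.append(tag)
        if tag == "label" and attributes.get("for"):
            self.label_targets.add(attributes["for"])
        if tag in FIELD_TAGS and attributes.get("type") not in NO_LABEL_TYPES:
            self.field_ids.append(attributes.get("id"))


def audit_semantics(html):
    parser = SemanticAudit()
    parser.feed(html)
    parser.close()
    unlabeled = [
        field_id for field_id in parser.field_ids
        if field_id is None or field_id not in parser.label_targets
    ]
    return {
        "h1_count": parser.h1_count,
        "clickable_non_controls": parser.clickable_non_controls,
        "unlabeled_field_count": len(unlabeled),
    }


sample_page = """
<h1>Contact Us</h1>
<div onclick="buy()">Buy Now</div>
<label for="email">Email</label><input id="email" type="email">
<input type="text" placeholder="Name">
<button type="submit">Send</button>
"""
print(audit_semantics(sample_page))
# {'h1_count': 1, 'clickable_non_controls': ['div'], 'unlabeled_field_count': 1}
```

A script like this does not replace the accessibility tree view in DevTools, but it can triage a large crawl before manual review.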

How Do You Stop Images And Videos From Hiding Important Facts?

Repeat important image and video information in crawlable text. AI systems may process images and video in some environments, but you should not make critical claims depend on that.

Orbit’s checklist highlights a common problem: companies put certifications, badges, trust seals, product claims, and differentiators inside images. Humans can see them. A basic crawler may only see an empty image tag or vague alt text.

Use this rule: if the fact would affect trust, qualification, pricing, eligibility, compliance, or conversion, it needs to exist as text.

That means:

- Add descriptive captions under charts and diagrams.

- Include transcripts for videos.

- Summarize the key points from embedded webinars.

- Write certification and award claims in body copy.

- Put comparison data in HTML tables, not only graphic layouts.

- Use alt text for image meaning, but do not rely on alt text as the only location for critical copy.

This is also useful for AI Overview optimization because source-worthy passages often need concise, extractable wording.
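As a starting point for an image audit, the following sketch lists images that carry no alt text at all. The helper name `images_needing_review` is hypothetical. An empty `alt` is valid for purely decorative images, so treat the output as a review list, not as a list of errors; the real question for each hit is whether the image contains a fact that is missing from the body copy.

```python
from html.parser import HTMLParser


class ImageAltAudit(HTMLParser):
    """List <img> elements whose alt attribute is missing or empty."""

    def __init__(self):
        super().__init__()
        self.needs_review = []  # src of each image without alt text

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img" and not (attributes.get("alt") or "").strip():
            self.needs_review.append(attributes.get("src"))


def images_needing_review(html):
    parser = ImageAltAudit()
    parser.feed(html)
    parser.close()
    return parser.needs_review


sample = """
<img src="/badges/iso-27001.png">
<img src="/charts/pricing.png" alt="Bar chart comparing the three pricing plans">
<p>Example Co holds ISO 27001 certification.</p>
"""
print(images_needing_review(sample))  # ['/badges/iso-27001.png']
```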

Why Should You Strip Unnecessary CSS, JavaScript, And CMS Bloat?

Strip unnecessary CSS, JavaScript, and CMS bloat because AI systems need the page content and action structure, not the weight of every theme, plugin, builder, tracking snippet, and animation library.

CMSs often create messy source code by default. Page builders add nested wrappers. Plugins enqueue CSS and JavaScript sitewide even when one page does not need them. Themes ship icon fonts, sliders, animation code, shortcodes, duplicate schema, and inline styles that survive long after the design has changed.

That bloat creates four AI-readiness problems:

| Bloat Type | AI-Friendly Risk | Fix |
|---|---|---|
| Client-rendered core content | Crawlers may miss content that appears only after JavaScript runs | Render essential content server-side or statically |
| Hidden duplicate markup | Models may extract stale or conflicting content | Remove unused blocks and old page-builder residue |
| Heavy CSS and layout shifts | Screenshot-based agents may misread unstable layouts | Reduce unused CSS and reserve stable space |
| Excess scripts | Agents and crawlers may face slower, noisier, more fragile pages | Defer nonessential scripts and remove unused plugin assets |

Kevin Indig’s commentary in the Orbit source is especially relevant here. His point is that many LLM crawlers rely on initial HTML, so essential content loaded only through client-side JavaScript can be missed.

You do not need to make every page plain. You need to keep the source of truth visible without requiring a fragile interaction.
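One way to test the initial-HTML point is to check whether the facts you care about appear in the raw response before any script runs. The sketch below assumes you have already fetched the page source (for example with curl) and have a list of phrases that must be present; `missing_from_initial_html` is a hypothetical helper name. Text inside `<script>`, `<style>`, and `<template>` is ignored because it is not visible content at load time.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text that sits outside script, style, and template elements."""

    SKIP = {"script", "style", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_from_initial_html(html, required_phrases):
    """Return the phrases that do not appear in the server-delivered text."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    # Collapse whitespace so line breaks in the source do not hide a match.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in required_phrases if phrase.lower() not in text]


initial_html = """<html><body>
<h1>Pricing</h1>
<p>The Starter plan costs $49 per month.</p>
<div id="specs"></div>
<script>document.getElementById("specs").textContent = "Includes 10 user seats";</script>
</body></html>"""

missing = missing_from_initial_html(
    initial_html, ["$49 per month", "Includes 10 user seats"]
)
print(missing)  # ['Includes 10 user seats']
```

In this made-up example the price is in the initial HTML, but the seat count exists only as a string inside a script, which is the kind of gap a crawler that does not execute JavaScript would miss.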

How Do CMS Teams Audit AI-Unfriendly Code?

Audit AI-unfriendly code by comparing what humans see, what the initial HTML source contains, what the rendered DOM contains after JavaScript runs, and what the accessibility tree exposes.

Use this four-view test:

| View | Question | Tooling |
|---|---|---|
| Browser view | Can a human understand the page quickly? | Manual QA |
| View source | Does the initial HTML contain the core answer? | Browser source, curl |
| Rendered DOM | Does JavaScript change, duplicate, or hide important content? | DevTools, Screaming Frog rendering |
| Accessibility tree | Are controls, labels, regions, and states understandable? | Chrome DevTools accessibility panel |

Pay close attention to WordPress and other CMS patterns:

- Accordion content that does not exist in initial HTML.

- Tabs that load content only after interaction.

- Product specs stored as image blocks.

- Icon-only buttons with no accessible name.

- Forms that depend on placeholders instead of labels.

- Multiple Organization schema blocks from competing plugins.

- Old shortcodes or builder markup inside the page source.

- Sitewide scripts loaded on every article.
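The duplicate-schema item in the list above is easy to check in code. This sketch collects every JSON-LD block on a page and counts the `@type` values it finds, so competing Organization blocks stand out. The function name `schema_type_counts` is hypothetical, and the parsing covers only the common shapes (a single object, a list, or an `@graph` array).

```python
import json
from collections import Counter
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            self.blocks.append("".join(self._buffer))


def schema_type_counts(html):
    """Count schema.org @type values across all JSON-LD blocks on the page."""
    parser = JsonLdCollector()
    parser.feed(html)
    parser.close()
    counts = Counter()
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            counts["(invalid JSON-LD)"] += 1
            continue
        if isinstance(data, dict):
            items = data.get("@graph", [data])
        elif isinstance(data, list):
            items = data
        else:
            items = []
        for item in items:
            if not isinstance(item, dict):
                continue
            types = item.get("@type", [])
            for type_name in ([types] if isinstance(types, str) else types):
                counts[type_name] += 1
    return counts


sample_page = """
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Organization", "name": "Example Co"}</script>
<script type="application/ld+json">{"@context": "https://schema.org", "@graph": [
  {"@type": "Organization", "name": "Example Company"},
  {"@type": "Article", "headline": "How To Make A Website AI Friendly"}
]}</script>
"""
counts = schema_type_counts(sample_page)
duplicates = {name: n for name, n in counts.items() if n > 1}
print(duplicates)  # {'Organization': 2}
```

Here two plugins would be describing the same organization under slightly different names, which is the kind of conflict worth resolving at the template level.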

This work sits naturally inside technical SEO and SEO content because the implementation and editorial layers need to agree.

How Do You Make Brand Claims Verifiable For AI Systems?

Make brand claims verifiable by connecting page copy to proof that exists on your site and across the wider web.

Liza Adams’ expert commentary in the Orbit source is a useful reminder: AI recommendations do not depend on your website alone. AI systems can form impressions from publications, comments, reviews, employee feedback, directories, and other sources.

That is why an AI-friendly website should not make unsupported claims such as “best agency,” “trusted by leading brands,” or “industry-leading platform” without evidence nearby.

Better patterns include:

| Weak Claim | AI-Friendly Rewrite |
|---|---|
| “We are the best SEO agency.” | “We specialize in technical SEO, AI SEO, and content systems for SaaS, ecommerce, and publishing teams.” |
| “Trusted by top brands.” | “Client work includes ecommerce, SaaS, and publishing projects; see case studies and reviewed testimonials.” |
| “Fast implementation.” | “Most technical audit fixes are prioritized into 30-day, 60-day, and engineering-backlog groups.” |
| “AI-ready content.” | “Every article includes author metadata, reviewer metadata, source URLs, markdown export, and structured headings.” |

The more specific claim gives AI systems a cleaner entity profile. It also gives users a better reason to trust the page.

For deeper entity work, connect this with entity SEO and LLM seeding, because off-site corroboration is often what turns a claim into usable evidence.

How Should Forms Change For AI And Agentic Visitors?

Forms should include AI as a discovery source, preserve clear labels, and make action consequences explicit.

Orbit’s article gives a practical example from Wil Reynolds: when a form lets users choose ChatGPT as a source, the business can ask what prompt path brought them there. That turns AI-driven demand into research data.

At minimum, review these form elements:

| Form Element | AI-Friendly Improvement |
|---|---|
| “How did you find us?” | Add options for ChatGPT, Gemini, Perplexity, Claude, Google AI Overviews, and Other AI tool |
| Prompt path | Add an optional field asking what the user asked the AI system |
| Labels | Connect each label to the correct input |
| Required fields | Mark required fields visibly and programmatically |
| Submit button | Use a real button with a clear action label |
| Confirmation | Explain what happens next, who responds, and when |
| Privacy note | State how prompt data and contact data will be used |

For agentic visitors, the next layer is consent. If an agent fills a form, books a call, starts checkout, or requests a quote, the page should make the boundary visible before submission.

What Is The AI-Friendly Page Template?

An AI-friendly page template gives humans a strong experience while exposing clean, structured, machine-readable content.

Use this model for articles, service pages, product pages, and comparison pages:

- Clear H1 that names the topic, product, service, or question.

- Short direct answer or summary near the top.

- Author, reviewer, editor, and updated date where relevant.

- Canonical URL and structured article or page schema.

- Copy markdown or summarize-for-LLMs button near share controls.

- Semantic headings that answer real user questions.

- Tables for specs, comparisons, pricing, or criteria.

- Lists for steps, requirements, and checklists.

- Text alternatives for visual and video information.

- Explicit fit, non-fit, policies, and constraints.

- Internal links to related context.

- External sources for claims that need support.

- Clean HTML that works with JavaScript disabled for core content.

This template supports both answer engine optimization and classic SEO. It also gives future protocol layers a cleaner foundation, whether the site later exposes structured data through APIs, feeds, MCP servers, or browser-native agent tools.

What Should You Measure After The Changes?

Measure AI readiness through visibility, extraction quality, crawl quality, and lead quality.

Start with these checks:

| Measurement Area | What To Track |
|---|---|
| AI answer visibility | Whether the brand or page appears in ChatGPT, Gemini, Perplexity, AI Overviews, and AI Mode prompts |
| Citation visibility | Which pages get cited, and which competitors are cited instead |
| Extraction quality | Whether assistants summarize the page accurately from copied markdown |
| Crawler access | Server log visits from known search and AI user agents |
| Rendering gaps | Differences between initial HTML and rendered DOM |
| Accessibility tree | Missing labels, roles, names, and states |
| AI referrals | GA4 referrers from known AI web surfaces |
| Dark AI demand | Leads who self-report AI tools or prompt paths |

Do not wait for perfect AI attribution. The better move is to combine prompt tracking, analytics, server logs, CRM fields, and manual answer reviews.

That is the same measurement logic behind prompt research for AI SEO: prompts reveal how buyers ask, sources reveal what systems trust, and your website updates close the gaps.
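For the referral and crawler rows, a small classifier over exported referrers and server-log user agents is often enough to get started. The sketch below is illustrative only: the hostnames and user-agent tokens are assumptions based on names the vendors have published, the lists are incomplete, and they will drift, so verify them against current vendor documentation before relying on the output.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames for AI web surfaces. Incomplete; review regularly.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
}

# Assumed user-agent tokens for AI crawlers and fetchers. Also incomplete.
AI_USER_AGENT_TOKENS = (
    "GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot", "PerplexityBot",
)


def classify_referrer(referrer):
    """Return the AI surface name for a referrer URL, or None if it is not listed."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def ai_agent_in_user_agent(user_agent):
    """Return the first known AI token found in a user-agent string, or None."""
    lowered = user_agent.lower()
    for token in AI_USER_AGENT_TOKENS:
        if token.lower() in lowered:
            return token
    return None


print(classify_referrer("https://www.perplexity.ai/search?q=ai+friendly+website"))
# Perplexity
print(classify_referrer("https://www.google.com/"))
# None
print(ai_agent_in_user_agent(
    "Mozilla/5.0 AppleWebKit/537.36 (KHTML, like Gecko; compatible; GPTBot/1.2)"
))
# GPTBot
```

User-agent strings can be spoofed, so log-based counts are a signal to combine with the other measurements, not a precise total.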

What Is The Practical AI-Friendly Website Checklist?

The practical checklist is not one flat list. It should show the area, the implementation detail, the owner, the evidence to collect, the priority, and the status. That turns AI readiness from advice into an operating sheet.

Use the workbook below with your SEO, content, design, analytics, and engineering teams. Copy it to Google Sheets for collaboration or download the XLSX file for an audit package.

AI-Friendly Website Implementation Workbook

Use this as a working audit sheet. Each row lists the area, checklist item, implementation detail, owner, evidence to collect, priority, status, and notes.

| Area | Checklist Item | Implementation Detail | Owner | Evidence To Collect | Priority | Status | Notes |
|---|---|---|---|---|---|---|---|
| Discovery | Robots and crawler access | Confirm public pages, assets, and AI-relevant paths are not blocked accidentally. | Technical SEO | robots.txt, server logs, crawl test | High | Not started | |
| Discovery | XML sitemap coverage | List canonical pages that answer product, service, policy, comparison, and support questions. | SEO | Sitemap export, index coverage | High | Not started | |
| Discovery | Important content in initial HTML | Make core copy, specs, prices, policies, and answers visible without client-side rendering. | Engineering | Rendered HTML diff, crawler snapshot | High | Not started | |
| Structure | Semantic landmarks | Use header, nav, main, article, section, aside, and footer for predictable page regions. | Frontend | DOM inspection, accessibility tree | High | Not started | |
| Structure | Heading hierarchy | Use one H1, descriptive H2s, and nested H3s that map to real subquestions. | Content | Outline review, page source | High | Not started | |
| Structure | Tables for comparisons | Put specs, pricing, feature comparisons, and evaluation criteria in real table markup. | Content | Table audit, schema review | Medium | Not started | |
| Structure | Lists for steps and criteria | Use ol and ul markup for processes, requirements, pros, cons, and eligibility rules. | Content | DOM inspection | Medium | Not started | |
| Accessibility | Accessible names for controls | Give buttons, inputs, toggles, and menus clear labels, states, and roles. | Frontend | Accessibility tree, Lighthouse | High | Not started | |
| Accessibility | Label/input pairing | Connect labels with inputs using for and id attributes or accessible equivalents. | Frontend | Form audit | High | Not started | |
| Accessibility | Stable action areas | Make required buttons and links visible, large enough, and stable across responsive states. | Design | Mobile and desktop QA | Medium | Not started | |
| Content | Entity clarity | State what the brand does, who it serves, where it operates, and what proof supports it. | Content | Homepage and service page review | High | Not started | |
| Content | Negotiation points for agents | Declare acceptable formats, constraints, policies, prices, and action boundaries in crawlable content. | Product/SEO | Policy page, service page, markdown block | High | Not started | |
| Content | LLM summary block | Add a concise machine-readable summary that captures the page claim, audience, key facts, and source context. | Content | Markdown summary, header metadata | Medium | Not started | |
| Content | Media text backup | Repeat important claims from images, charts, and videos in body text, captions, or transcripts. | Content | Image/video audit | High | Not started | |
| Content | Comparison clarity | Explain when your product or service is a fit, when it is not, and how it compares to alternatives. | Content | Comparison page, FAQ | Medium | Not started | |
| Performance | CSS bloat reduction | Remove unused styles, large theme payloads, hidden page-builder output, and duplicated design-system CSS. | Engineering | Coverage report, bundle analyzer | Medium | Not started | |
| Performance | JavaScript bloat reduction | Ship less client JavaScript, defer nonessential scripts, and keep content independent from interactions. | Engineering | Bundle report, no-JS test | High | Not started | |
| Performance | CMS output cleanup | Audit page-builder wrappers, shortcode residue, plugin assets, inline styles, and duplicate schema. | Engineering/SEO | Source crawl, template audit | Medium | Not started | |
| Trust | Author and reviewer signals | Show real authors, reviewers, bios, credentials, dates, and editorial responsibility. | Editorial | Byline schema, author pages | Medium | Not started | |
| Trust | External corroboration | Align website claims with reviews, directories, social profiles, documentation, and third-party mentions. | Brand/PR | Entity consistency sheet | High | Not started | |
| Agent UX | Copy markdown for LLMs | Offer a clean markdown export near share controls so users can pass the page to an assistant with attribution. | Frontend/Content | Button test, clipboard payload | Medium | Not started | |
| Agent UX | Action boundaries | Make forms, checkout, booking, returns, cancellation, and support policies explicit before an agent acts. | Product | Policy pages, form audit | High | Not started | |
| Measurement | AI referral tracking | Create GA4 explorations for AI referrers and separate known chatbot domains from organic search. | Analytics | GA4 exploration, channel grouping | Medium | Not started | |
| Measurement | Agent log monitoring | Track known AI user agents and virtual browser visits in server logs outside GA4. | Engineering/Analytics | Log sample, bot filter | Medium | Not started | |
| Measurement | Prompt feedback capture | Ask leads which AI tool and prompt path brought them to the site when appropriate. | Demand Gen | Form field, CRM property | Low | Not started | |