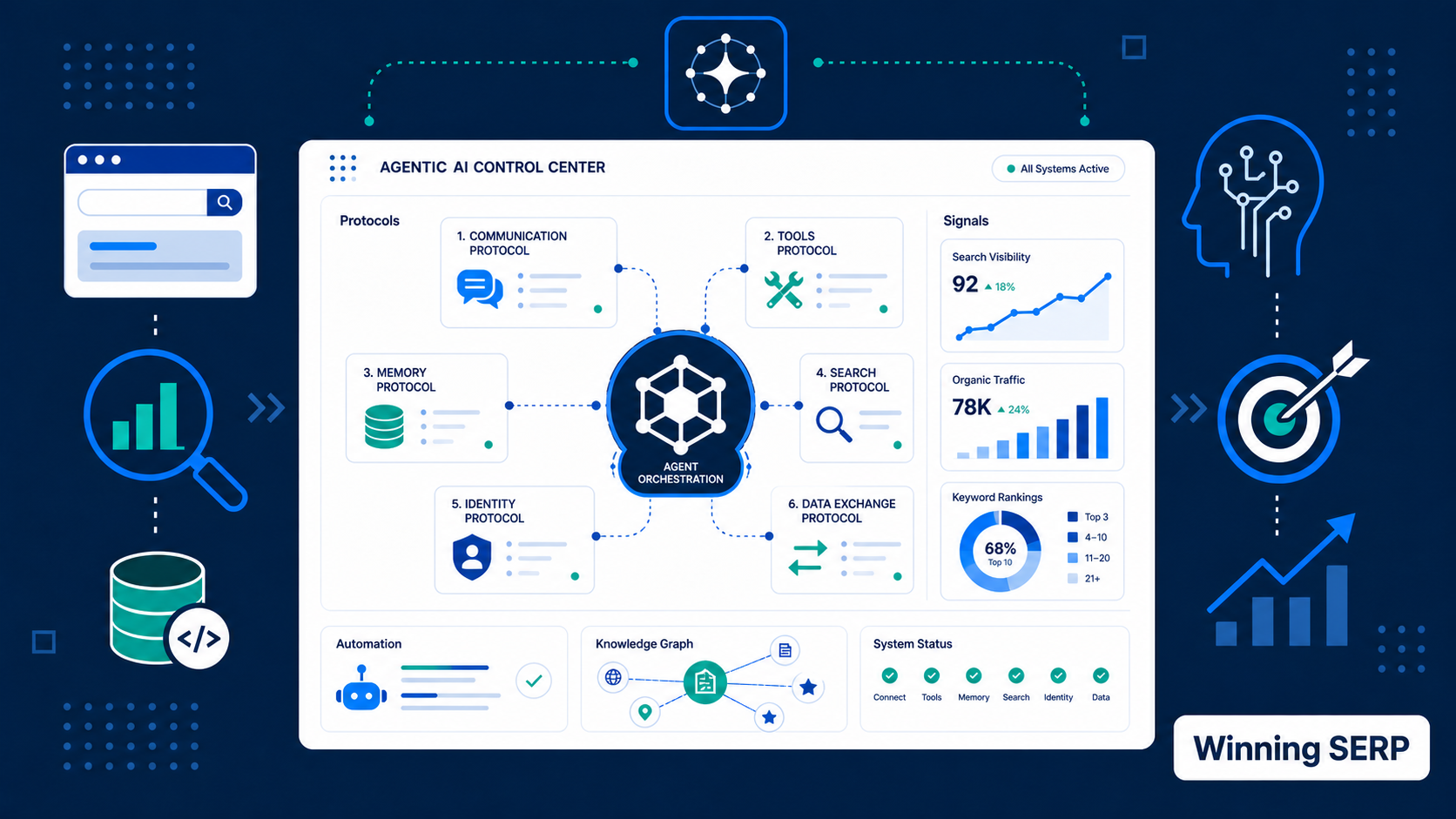

Agentic AI protocols are the technical rules that help AI agents discover capabilities, retrieve trusted data, call tools, coordinate with other agents, and complete actions for users. For SEO teams, they matter because agentic search is moving from “find an answer” toward “complete a task.”

That shift changes the job. A page still needs to rank, load, answer the query, and earn trust. But an AI agent may also need to check inventory, compare policies, confirm pricing, validate a brand claim, open a checkout path, or hand a task to another specialist agent.

Protocols give that agent a more reliable way to interact with the web than scraping pages, guessing from screenshots, or clicking through brittle interfaces. They do not replace AI SEO, structured data, crawlability, content quality, or brand authority. They add another layer on top of them.

What Are Agentic AI Protocols?

Agentic AI protocols are standards and specifications that define how AI agents communicate with tools, websites, other agents, and commerce systems.

The simplest way to understand them is to separate intent from action. A user gives intent in natural language. The agent turns that intent into smaller tasks. Protocols help the agent complete those tasks without inventing a custom integration every time.

For example, a user might ask an assistant to “find a durable office chair under $400, compare return policies, and order the best option.” A basic answer engine can summarize buying advice. An agentic system may need product data, reviews, prices, shipping rules, merchant capabilities, payment authorization, and confirmation steps.

That workflow touches several layers:

| Layer | What the Agent Needs | Protocol Examples |
|---|---|---|
| Tool and data access | Connect to APIs, databases, files, tools, and business systems | MCP |
| Agent coordination | Let one agent discover and collaborate with another agent | A2A |
| Website interaction | Make site content and actions queryable or callable | NLWeb, WebMCP |
| Commerce | Let agents discover products, negotiate checkout, and complete purchases | ACP, UCP, AP2 |
| SEO foundations | Make facts crawlable, structured, consistent, and verifiable | HTML, Schema.org, feeds, sitemaps, APIs |

The SEO lesson is straightforward: protocols work best when your underlying information is already clean. If your product details, location data, pricing, policies, reviews, and service descriptions are inconsistent, protocol adoption can expose the mess faster.

Why Should SEOs Care About Protocols?

SEOs should care because protocols influence whether AI agents can understand and act on a brand’s information reliably.

Classic SEO asks whether a page can be crawled, indexed, ranked, and clicked. AI search asks whether the page can be retrieved, summarized, cited, and compared. Agentic SEO adds another question: can an agent safely use this information to move the user closer to an outcome?

That outcome might be commercial, but it does not have to be. Agents may:

- Compare software vendors.

- Find a clinic appointment.

- Build a travel itinerary.

- Pull documentation into a coding workflow.

- Check whether a brand claim appears on trusted sources.

- Collect product specs for a buying recommendation.

- Ask another agent to complete a specialized step.

This is why AI source coverage becomes more important. Agents often need corroboration before they recommend a brand. They may inspect owned content, third-party pages, reviews, documentation, public profiles, and machine-readable feeds.

Protocols reduce friction, but they do not create trust by themselves. A brand with crawlable facts, clear entities, strong reviews, useful documentation, and consistent third-party mentions gives agents more confidence. A brand with vague marketing copy and conflicting profiles gives agents more reasons to hedge.

Which Protocols Matter Most for SEO?

The most important agentic AI protocols for SEOs are Model Context Protocol, Agent2Agent, NLWeb, WebMCP, Agentic Commerce Protocol, Universal Commerce Protocol, and Agent Payments Protocol.

You do not need to implement all of them now. Most sites should first understand what each layer does, then decide which protocols fit their business model.

| Protocol | Full Name | Primary Role | SEO Relevance |
|---|---|---|---|
| MCP | Model Context Protocol | Connect agents to tools and data sources | Makes structured business data easier for AI systems to access |
| A2A | Agent2Agent Protocol | Enable agent-to-agent communication | Supports multi-agent workflows that may include brand or product checks |
| NLWeb | Natural Language Web | Add natural language endpoints to websites | Helps users and agents query site content directly |
| WebMCP | Web Model Context Protocol | Expose browser-native tools from web apps | Helps sites declare actions instead of relying on UI scraping |
| ACP | Agentic Commerce Protocol | Agent-led checkout between buyers, agents, and merchants | Makes products purchasable inside AI surfaces |
| UCP | Universal Commerce Protocol | Commerce interoperability across agents, businesses, and payment providers | Broadens merchant discovery, negotiation, checkout, and post-purchase flows |
| AP2 | Agent Payments Protocol | Payment authorization and mandates for agents | Helps establish user intent and payment accountability |

Treat the list as a stack, not a leaderboard. MCP is not “better” than A2A because they solve different problems. NLWeb is not the same thing as WebMCP because one focuses on natural language access to site content, while the other focuses on browser-exposed tools.

How Do Protocols Relate to Robots, Sitemaps, and Schema?

Agentic AI protocols do not replace robots.txt, XML sitemaps, internal links, feeds, Schema.org, or accessible HTML. They sit beside those foundations.

Search crawlers still need discovery paths. AI retrieval systems still need readable pages. Agents still need clear entities, predictable URLs, and trustworthy source material. A protocol can expose a tool or endpoint, but it does not solve unclear page architecture by itself.

Think of the classic SEO stack as the public evidence layer:

| SEO Asset | What It Gives Search Systems | What It Gives Agents |
|---|---|---|
| Robots.txt | Crawl permissions and disallow rules | A signal about what automated systems may access |
| XML sitemap | Canonical URL discovery | A map of important public resources |
| Internal links | Site structure and topical relationships | Context for related actions and decisions |
| Schema.org | Entity and content meaning | Structured facts for extraction and comparison |
| Product feeds | Catalog data for search and shopping | Inventory, price, and availability facts |
| APIs | Programmatic access to systems | Safer task execution when properly permissioned |
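
The robots.txt row can be checked directly. The sketch below uses Python's standard `urllib.robotparser` to test which user agents may fetch which URLs. The robots.txt content, the example.com URLs, and the choice of user-agent tokens are illustrative assumptions, so swap in your own file and confirm current crawler tokens in each vendor's documentation.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; substitute the file your site actually serves.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /account/

User-agent: GPTBot
Disallow: /
"""

# User-agent tokens to test (assumed list; verify against vendor docs).
AGENTS = ["Googlebot", "GPTBot", "ClaudeBot"]

# Hypothetical URLs that carry facts an agent might need.
URLS = [
    "https://example.com/products/office-chair",
    "https://example.com/policies/returns",
    "https://example.com/cart/",
]


def access_matrix(robots_txt, agents, urls):
    """Return {agent: {url: allowed}} according to the robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        agent: {url: parser.can_fetch(agent, url) for url in urls}
        for agent in agents
    }


matrix = access_matrix(ROBOTS_TXT, AGENTS, URLS)
for agent, rules in matrix.items():
    blocked = [url for url, allowed in rules.items() if not allowed]
    print(agent, "blocked from:", blocked)
```

In this sample, one crawler is blocked from the product and policy pages that hold the facts an agent would need, which is the kind of mismatch worth catching before any protocol work.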

Protocols become more useful when these assets agree with each other. If a sitemap lists old URLs, structured data says one price, visible content says another, and a feed contains stale inventory, an agent has to decide which source to trust. Many systems will choose caution.

This is why protocol readiness should start with source-of-truth governance. Decide where each fact lives. Product prices might come from the commerce platform. Service descriptions might come from the CMS. Locations might come from a local listings platform. Reviews might come from a review provider. The website should not invent separate versions of those facts unless someone owns the update process.

For SEO teams, this creates a practical audit question: if an AI agent asked five systems the same question about your brand, would it get the same answer?
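
That audit question can be turned into a small check. The following is a minimal sketch, assuming you have already collected each fact from each source by hand or by script. The fact names, source names, and values are hypothetical.

```python
from collections import defaultdict


def find_conflicts(observations):
    """Group (fact, source, value) rows and return facts whose sources disagree."""
    by_fact = defaultdict(dict)
    for fact, source, value in observations:
        # Normalize lightly so "349.00 " and "349.00" count as the same answer.
        by_fact[fact][source] = str(value).strip().lower()
    return {
        fact: values
        for fact, values in by_fact.items()
        if len(set(values.values())) > 1
    }


# Hypothetical observations gathered from different systems.
OBSERVATIONS = [
    ("chair_price_usd", "visible_page", "349.00"),
    ("chair_price_usd", "schema_markup", "349.00"),
    ("chair_price_usd", "product_feed", "379.00"),
    ("return_window_days", "visible_page", "30"),
    ("return_window_days", "policy_page", "30"),
]

conflicts = find_conflicts(OBSERVATIONS)
for fact, values in conflicts.items():
    print(fact, values)
```

Here only the price is flagged, because the product feed disagrees with the page and the markup. The owner of that fact, as decided in the governance step, is the one who should fix it.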

How Does MCP Affect SEO?

Model Context Protocol affects SEO by making structured data, tools, and business systems easier for AI applications to access through a standard interface.

Anthropic introduced MCP in November 2024 as an open standard for connecting AI assistants to external systems where data lives. The core idea is simple: instead of every AI app building a custom connector for every data source, developers can expose data or tools through MCP servers, and AI applications can connect as MCP clients.

For SEO, MCP matters because many ranking and recommendation decisions depend on information outside a single webpage. A SaaS company may have plan data in a billing system, documentation in a docs platform, customer proof in a CRM, and product updates in a changelog. An ecommerce business may have catalog, availability, shipping, and price data spread across several systems.

MCP can help agents access that information in a more controlled way than scraping public pages. That creates opportunities, but it also raises the bar for data governance.

SEOs should ask:

- Which facts should agents be allowed to access?

- Which facts must remain private or authenticated?

- Does the public website match the source systems?

- Are tool descriptions specific enough for an agent to choose the right action?

- Can sensitive actions be logged, permissioned, and reversed?

MCP is not an SEO tag. You do not add it to a page and magically become visible. It is closer to an integration layer. The SEO work is making sure your core facts, entities, documentation, and action paths are clean enough to expose.

This is where a technical SEO audit starts to overlap with agent readiness. Crawlability, structured data, canonical signals, API documentation, authentication boundaries, and server logs all become part of the same conversation.
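
To make the integration-layer idea concrete, here is a rough sketch of the message shapes involved. MCP uses JSON-RPC 2.0, and a tool is described by a name, a description, and an input schema. The code is not the official SDK: it skips the initialization handshake and transport, the `get_plan` tool and plan data are hypothetical, and the exact field names should be checked against the current MCP specification.

```python
import json

# Hypothetical plan data that would normally live in a billing system.
PLANS = {
    "starter": {"price_usd": 29, "seats": 3},
    "team": {"price_usd": 99, "seats": 10},
}

# A specific description helps an agent choose the right tool.
TOOLS = [{
    "name": "get_plan",
    "description": "Return the monthly price in USD and seat limit for one named pricing plan.",
    "inputSchema": {
        "type": "object",
        "properties": {"plan": {"type": "string", "enum": sorted(PLANS)}},
        "required": ["plan"],
    },
}]


def _reply(request, key, payload):
    return {"jsonrpc": "2.0", "id": request.get("id"), key: payload}


def handle(request):
    """Answer a JSON-RPC 2.0 request for the two tool methods sketched here."""
    method = request["method"]
    params = request.get("params", {})
    if method == "tools/list":
        return _reply(request, "result", {"tools": TOOLS})
    if method == "tools/call":
        if params.get("name") != "get_plan":
            return _reply(request, "error", {"code": -32602, "message": "Unknown tool"})
        plan = PLANS.get(params.get("arguments", {}).get("plan"))
        text = json.dumps(plan) if plan else "unknown plan"
        content = [{"type": "text", "text": text}]
        return _reply(request, "result", {"content": content, "isError": plan is None})
    return _reply(request, "error", {"code": -32601, "message": "Method not found"})


response = handle({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "get_plan", "arguments": {"plan": "team"}},
})
print(response["result"]["content"][0]["text"])
```

The point for SEO teams is the description and schema: they are the only clues an agent has about what the tool returns, so they deserve the same care as a title tag.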

How Is A2A Different From MCP?

Agent2Agent is different from MCP because A2A helps agents communicate with other agents, while MCP helps agents connect to tools and data sources.

Google announced the Agent2Agent Protocol in April 2025 to support interoperability between agents built by different vendors or frameworks. The protocol focuses on discovery, communication, task handoff, and collaboration without forcing an agent to reveal its internal memory, tools, or logic.

That distinction matters. A travel-planning agent may use MCP to check a hotel inventory system. It may use A2A to ask a separate loyalty-program agent to confirm points availability. A customer-support agent may use MCP to read a knowledge base, then use A2A to hand a billing issue to a finance agent.

For SEO, A2A is less about page markup and more about ecosystem visibility. If agents coordinate with each other, a brand may be evaluated across multiple specialist contexts:

| Agent Role | What It May Check | SEO Implication |

|---|---|---|

| Research agent | Category definitions, comparisons, third-party sources | Publish clear explainers and comparison content |

| Product agent | Pricing, specs, availability, policies | Keep product and service data current |

| Trust agent | Reviews, entity consistency, public profiles | Improve entity consistency |

| Commerce agent | Checkout capability, shipping, returns | Make transactional facts machine-readable |

| Support agent | Documentation, FAQs, troubleshooting | Maintain useful support content and structured answers |

A2A will matter most for businesses that participate in partner ecosystems, marketplaces, enterprise workflows, or complex customer journeys. A local service business may not need to implement A2A soon. But it still needs clean facts because other agents may reference it through search, maps, directories, or review platforms.

What Does NLWeb Do for Websites?

NLWeb helps websites offer a natural language interface over their own content and data.

Microsoft introduced NLWeb at Build 2025 as an open project for adding conversational interfaces to websites. Microsoft describes each NLWeb endpoint as also being an MCP server, which means a site can serve both humans asking natural language questions and agents looking for structured access.

That idea is important for publishers, ecommerce sites, directories, recipe sites, travel sites, service marketplaces, and documentation-heavy businesses. Many websites already contain the right information, but users and agents struggle to retrieve it because the site depends on navigation paths, filters, internal search, or JavaScript-heavy interfaces.

NLWeb points toward a more direct pattern:

- A user asks a natural language question on the site.

- The site answers from its own data.

- The response can use structured formats such as JSON built on Schema.org types.

- AI agents can discover the same endpoint through MCP if the site allows it.
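
The pattern above can be sketched in a few lines. This is not the NLWeb implementation, which has its own retrieval and model pipeline. It is a toy keyword matcher over a hypothetical in-memory catalog, meant only to show a question going in and Schema.org-typed JSON coming out. The function name `answer` and the catalog records are assumptions.

```python
import json
import re

# Hypothetical catalog records; a real site would read these from its own data store.
CATALOG = [
    {"name": "Ergo Task Chair", "description": "Mesh office chair with lumbar support", "price": 349.0},
    {"name": "Oak Standing Desk", "description": "Height-adjustable desk", "price": 599.0},
    {"name": "Basic Office Chair", "description": "Padded office chair with fixed arms", "price": 129.0},
]


def _words(text):
    return set(re.findall(r"[a-z]+", text.lower()))


def answer(query, catalog=CATALOG):
    """Return matching products as a Schema.org ItemList (naive keyword overlap)."""
    query_words = _words(query)
    cap = re.search(r"under \$?(\d+)", query.lower())
    max_price = float(cap.group(1)) if cap else None
    scored = []
    for record in catalog:
        score = len(query_words & _words(record["name"] + " " + record["description"]))
        if score == 0 or (max_price is not None and record["price"] > max_price):
            continue
        scored.append((-score, record["price"], record))
    scored.sort(key=lambda row: row[:2])  # best overlap first, then cheapest
    return {
        "@context": "https://schema.org",
        "@type": "ItemList",
        "itemListElement": [
            {
                "@type": "ListItem",
                "position": position,
                "item": {
                    "@type": "Product",
                    "name": record["name"],
                    "description": record["description"],
                    "offers": {"@type": "Offer", "price": f"{record['price']:.2f}", "priceCurrency": "USD"},
                },
            }
            for position, (_, _, record) in enumerate(scored, start=1)
        ],
    }


result = answer("office chair under $400")
print(json.dumps(result, indent=2))
```

The answer is only as good as the catalog behind it. If descriptions are thin or prices are stale, the structured response repeats those problems in a cleaner format.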

For SEO teams, NLWeb should trigger a content architecture question: does your site have enough structured, accurate, and queryable information to answer real questions?

If the answer is no, the protocol is not the first fix. Start with content modeling, Schema.org markup, clear taxonomy, canonical pages, and internal search quality. A conversational layer built on weak content usually produces weak answers.

NLWeb is especially relevant for sites that already use programmatic SEO or structured page templates. If your data model is clean, a natural language endpoint can reuse that structure. If your templates are thin, duplicated, or poorly governed, the endpoint may amplify the same problems.

What Is WebMCP and Why Is It Early?

WebMCP is an early browser API proposal that lets web applications expose JavaScript-based tools to AI agents through the browser.

The current WebMCP specification is a W3C Community Group draft, not a formal W3C standard. The draft describes a navigator.modelContext API that can let a web app register tools with names, descriptions, input schemas, and execution handlers. In plain English, the page can tell an agent what actions it offers instead of making the agent infer them from the DOM.

That difference is meaningful. Today, browser agents often rely on brittle methods:

- Reading visible text.

- Interpreting screenshots.

- Guessing which button to click.

- Following CSS selectors that may change.

- Repeating human UI flows.

WebMCP moves toward explicit capabilities. A booking page might expose a checkAvailability tool. A dashboard might expose exportReport. A product page might expose compareVariants or addToCart.

For SEO, WebMCP is not a ranking factor. It is an action-readiness layer. It will matter most for web applications where users complete tasks: ecommerce, SaaS dashboards, booking engines, calculators, configurators, account portals, and support tools.

Because WebMCP is still early, most SEO teams should not rush into production implementation. They should prepare by documenting high-value actions, improving accessibility, cleaning visible labels, and making sure every critical action has clear user consent. If a human cannot understand the interface, an agent should not be trusted to operate it silently.
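
One low-risk way to prepare is to write down each high-value action in the shape the draft describes: a name, a description, an input schema, and a handler. The sketch below models that shape in Python purely for documentation purposes. The real draft API is JavaScript running in the browser, so the `Tool` and `ToolRegistry` classes here are hypothetical stand-ins, not the WebMCP interface, and the availability data is invented.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Tool:
    name: str
    description: str
    input_schema: dict
    execute: Callable[[dict], Any]


class ToolRegistry:
    """Hypothetical in-memory registry that mirrors the name/description/schema/handler shape."""

    def __init__(self):
        self._tools = {}

    def register(self, tool):
        if tool.name in self._tools:
            raise ValueError(f"duplicate tool: {tool.name}")
        self._tools[tool.name] = tool

    def describe(self):
        """What an agent would read before choosing an action."""
        return [
            {"name": t.name, "description": t.description, "inputSchema": t.input_schema}
            for t in self._tools.values()
        ]

    def call(self, name, args):
        tool = self._tools[name]
        # Only required keys are checked here; a real validator would check types too.
        missing = [key for key in tool.input_schema.get("required", []) if key not in args]
        if missing:
            raise ValueError(f"missing arguments: {missing}")
        return tool.execute(args)


# Hypothetical availability data for a booking page.
OPEN_SLOTS = {"2025-07-01": ["09:00", "14:30"]}

registry = ToolRegistry()
registry.register(Tool(
    name="checkAvailability",
    description="List open appointment times for one date in YYYY-MM-DD format.",
    input_schema={"type": "object", "properties": {"date": {"type": "string"}}, "required": ["date"]},
    execute=lambda args: OPEN_SLOTS.get(args["date"], []),
))

print(registry.call("checkAvailability", {"date": "2025-07-01"}))
```

An inventory like this doubles as a spec for engineering later. If the description cannot be written in one clear sentence, the action probably is not ready to expose.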

How Do ACP, UCP, and AP2 Change Commerce SEO?

ACP, UCP, and AP2 matter because they move agentic AI from product discovery into checkout and payment authorization.

The Agentic Commerce Protocol is maintained by OpenAI and Stripe. It defines an interaction model for connecting buyers, AI agents, businesses, and payment providers so purchases can happen through AI agents while merchants keep their commerce infrastructure.

Universal Commerce Protocol takes a broader commerce-interoperability approach. Its documentation describes building blocks for discovery, buying, and post-purchase experiences across platforms, agents, businesses, and payment providers. UCP also describes support for existing standards such as REST, JSON-RPC, AP2, A2A, and MCP.

Agent Payments Protocol, announced by Google, focuses on trusted agent-led payments. It introduces payment mandates that help prove what the user authorized, whether the user is present during checkout or has delegated a future purchase condition.
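
The idea of a delegated purchase condition can be illustrated with a simple check. The sketch below is not the AP2 data model, and it leaves out the signing and verification that make a real mandate trustworthy. The `PurchaseMandate` and `Cart` records, their fields, and the merchant names are all hypothetical. It only shows the logic of comparing a proposed cart with what the user authorized.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class PurchaseMandate:
    """Hypothetical record of what a user delegated; not the AP2 schema."""
    item_query: str
    max_total_usd: float
    allowed_merchants: frozenset
    expires_at: datetime


@dataclass(frozen=True)
class Cart:
    merchant: str
    item_name: str
    total_usd: float


def violations(mandate, cart, now):
    """Return the reasons a cart falls outside the mandate (empty list means in scope)."""
    problems = []
    if now >= mandate.expires_at:
        problems.append("mandate expired")
    if cart.merchant not in mandate.allowed_merchants:
        problems.append("merchant not authorized")
    if cart.total_usd > mandate.max_total_usd:
        problems.append("total exceeds authorized amount")
    return problems


mandate = PurchaseMandate(
    item_query="durable office chair",
    max_total_usd=400.0,
    allowed_merchants=frozenset({"example-furniture.test"}),
    expires_at=datetime(2025, 7, 1, tzinfo=timezone.utc),
)
now = datetime(2025, 6, 15, tzinfo=timezone.utc)

in_scope = violations(mandate, Cart("example-furniture.test", "Ergo Task Chair", 349.0), now)
out_of_scope = violations(mandate, Cart("other-shop.test", "Ergo Task Chair", 429.0), now)
print(in_scope, out_of_scope)
```

Notice that the total has to include shipping, fees, and taxes for the comparison to mean anything, which is one reason transactional facts need to be machine-readable.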

For ecommerce SEO, the practical shift is clear. Product pages may no longer be the only conversion surface. An AI assistant may compare products, present options, and complete checkout inside a chat or agent interface.

That does not mean product pages stop mattering. They become the source of truth that agents use to answer and transact. Weak product data becomes a conversion problem inside AI surfaces, not only a ranking problem inside search results.

Commerce teams should prioritize:

| Area | What to Clean Up | Why Agents Need It |
|---|---|---|
| Product data | Names, variants, prices, availability, specs | Prevents wrong recommendations |
| Policies | Shipping, returns, warranties, restrictions | Helps agents compare risk |
| Merchant identity | Legal name, brand name, support contacts | Supports trust and accountability |
| Reviews | Review count, sentiment, recurring objections | Helps agents explain tradeoffs |
| Checkout | Payment methods, fees, taxes, confirmation steps | Reduces abandoned agent transactions |
| Consent | User approval and scoped authorization | Protects the buyer and merchant |
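
For the product data and policy rows, one practical pattern is to generate Schema.org markup from the same record that drives the page, so the two cannot drift apart. The following is a minimal sketch under that assumption. The record fields and values are hypothetical, and the markup covers only a few properties, so check Schema.org and your target platforms for the full set they expect.

```python
import json


def product_jsonld(record):
    """Build Schema.org Product markup from one source-of-truth record."""
    in_stock = "https://schema.org/InStock"
    out_of_stock = "https://schema.org/OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": record["name"],
        "sku": record["sku"],
        "brand": {"@type": "Brand", "name": record["brand"]},
        "offers": {
            "@type": "Offer",
            "price": f"{record['price']:.2f}",
            "priceCurrency": record["currency"],
            "availability": in_stock if record["in_stock"] else out_of_stock,
            "hasMerchantReturnPolicy": {
                "@type": "MerchantReturnPolicy",
                "returnPolicyCategory": "https://schema.org/MerchantReturnFiniteReturnWindow",
                "merchantReturnDays": record["return_days"],
            },
        },
    }


# Hypothetical record as it might come from a commerce platform.
RECORD = {
    "name": "Ergo Task Chair",
    "sku": "CHAIR-001",
    "brand": "Example Seating",
    "price": 349,
    "currency": "USD",
    "in_stock": True,
    "return_days": 30,
}

markup = product_jsonld(RECORD)
print(json.dumps(markup, indent=2))
```

Generating the markup this way means a price change in the commerce platform updates both the visible page and the structured data in one step.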

Service businesses should pay attention too. The same pattern can apply to appointment booking, quote requests, lead qualification, consultation scheduling, and subscription signups.

What Should SEOs Audit First?

SEOs should audit the machine-readable truth layer before chasing protocol implementation.

The biggest mistake is treating protocols as a shortcut. They are not magic wrappers. They are access patterns. If your data is incomplete, outdated, contradictory, or blocked, an agent-friendly protocol may only give systems faster access to bad information.

Start with this sequence:

- Crawl the site like a search engine.

- Compare visible content with Schema.org markup.

- Check whether product, service, pricing, location, and policy facts are consistent.

- Review robots.txt, meta robots, canonical tags, and blocked resources.

- Inspect server logs for search bots, AI crawlers, and unusual automated behavior.

- Map key tasks a user might delegate to an agent.

- Identify which tasks should be informational, authenticated, or human-confirmed.

- Decide whether MCP, NLWeb, WebMCP, ACP, UCP, or AP2 fits those tasks.

This is a practical extension of technical SEO. The page must still be crawlable. The content must still be useful. Internal links must still connect related ideas. Structured data must still match the visible page.

The protocol layer only becomes valuable after those basics are strong.
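
The server log step in the sequence above can start small. The sketch below counts requests from a few crawler tokens in combined-format access logs and lists the paths where those crawlers hit 4xx or 5xx responses. The log lines, user-agent strings, and token list are illustrative assumptions, and the regular expression expects the common combined log format, so adjust it to your server's configuration.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and user agent of a combined-format line.
LINE = re.compile(
    r'"(?:GET|POST|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

# Assumed token list; extend it with the crawlers that matter for your audience.
BOT_TOKENS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Googlebot"]


def summarize(lines):
    """Return (hits per bot, error counts per (bot, path))."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LINE.search(line)
        if not match:
            continue
        agent = match.group("ua").lower()
        bot = next((token for token in BOT_TOKENS if token.lower() in agent), None)
        if bot is None:
            continue  # not one of the crawlers being tracked
        hits[bot] += 1
        if match.group("status")[0] in "45":
            errors[(bot, match.group("path"))] += 1
    return hits, errors


# Illustrative log lines; the user-agent strings are simplified examples.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jun/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '203.0.113.5 - - [10/Jun/2025:10:00:02 +0000] "GET /docs/api HTTP/1.1" 403 310 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '198.51.100.7 - - [10/Jun/2025:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.9 - - [10/Jun/2025:10:02:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

hits, errors = summarize(SAMPLE_LOG)
print(dict(hits))
print(dict(errors))
```

User-agent strings can be spoofed, so treat these counts as a starting signal and verify important crawlers by the methods each vendor documents.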


How Should SEO Teams Prepare Content?

SEO teams should prepare content for agentic protocols by making answers direct, facts explicit, and entities consistent across pages.

Agents need to extract information quickly. They struggle when important details are scattered across vague marketing sections, hidden in images, trapped behind interactions, or contradicted by old pages.

Use direct answers under headings. Add comparison tables where tradeoffs matter. Keep policies current. Add summaries to long documentation. Name products, services, people, locations, and brands consistently. Maintain clean author and organization signals.

This is also where answer engine visibility overlaps with protocol readiness. AI systems need passages they can summarize, sources they can cite, and facts they can compare.

Good agent-ready content often includes:

- A plain-language definition of the product, service, or concept.

- Use cases and exclusions.

- Pricing or pricing logic when possible.

- Requirements, limits, supported regions, and eligibility.

- Comparisons against alternatives.

- Proof points with dates, examples, and named sources.

- FAQ answers that match real buyer or user tasks.

- Structured data that mirrors visible content.

Avoid publishing “AI protocol pages” that say little more than “we support agentic AI.” Agents need operational facts. Humans do too.
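
The last item in the list, structured data that mirrors visible content, is easy to test. The sketch below parses a page with Python's standard `html.parser`, pulls out JSON-LD blocks and visible text, and checks whether each marked-up price actually appears on the page. The sample HTML is hypothetical, and the check assumes each JSON-LD block is a single object with an `offers.price` value.

```python
import json
from html.parser import HTMLParser


class PageFacts(HTMLParser):
    """Collect JSON-LD blocks and visible text from one HTML document."""

    def __init__(self):
        super().__init__()
        self.jsonld, self.visible = [], []
        self._buffer = None  # collects script text while inside a JSON-LD block
        self._hidden = 0     # depth inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._hidden += 1
            if tag == "script" and dict(attrs).get("type") == "application/ld+json":
                self._buffer = []

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._hidden -= 1
            if self._buffer is not None:
                self.jsonld.append(json.loads("".join(self._buffer)))
                self._buffer = None

    def handle_data(self, data):
        if self._buffer is not None:
            self._buffer.append(data)
        elif self._hidden == 0 and data.strip():
            self.visible.append(data.strip())


def schema_price_on_page(html):
    """Return {product name: True if the marked-up price appears in visible text}."""
    facts = PageFacts()
    facts.feed(html)
    text = " ".join(facts.visible)
    results = {}
    for block in facts.jsonld:
        if not isinstance(block, dict):
            continue  # arrays and graphs are out of scope for this sketch
        price = str(block.get("offers", {}).get("price", ""))
        if price:
            results[block.get("name", "unnamed")] = price in text
    return results


# Hypothetical page where the markup and the visible price have drifted apart.
PAGE = """
<html><body>
<h1>Ergo Task Chair</h1>
<p>Now $379.00 with free shipping.</p>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "Product", "name": "Ergo Task Chair",
 "offers": {"@type": "Offer", "price": "349.00", "priceCurrency": "USD"}}
</script>
</body></html>
"""

check = schema_price_on_page(PAGE)
print(check)
```

A `False` result here means the markup says one price and the page shows another, which is exactly the kind of conflict that makes an agent hedge.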

How Should SEOs Handle Security and Trust?

SEOs should treat security and trust as part of agentic visibility, not as a separate engineering concern.

When agents can retrieve data or take action, the risk profile changes. A normal crawler reads pages. An agent may call a tool, submit a form, start checkout, export data, or trigger a workflow. That means every protocol project needs permission boundaries.

At minimum, teams should define:

| Risk Area | SEO-Relevant Question |
|---|---|
| Authentication | Which actions require a signed-in user? |
| Authorization | What can an agent do for each user role? |
| Consent | Which actions need explicit human confirmation? |
| Logging | Can we audit what the agent accessed or changed? |
| Data exposure | Are private facts excluded from public endpoints? |
| Prompt injection | Can page content manipulate agent behavior? |
| Source conflicts | What happens when external sources contradict our site? |

This is not fearmongering. It is basic operational hygiene. Protocols make access easier, so governance needs to become clearer.
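
Several of those questions can be expressed as a simple policy table. The sketch below is a hypothetical gate that decides whether an agent-initiated action is allowed, held for confirmation, or denied, and records every decision. The action names and flags are assumptions for illustration, and a production system would tie this to real authentication and durable logging.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ActionPolicy:
    requires_sign_in: bool
    requires_confirmation: bool


# Hypothetical policy table: informational, authenticated, and human-confirmed actions.
POLICIES = {
    "view_pricing": ActionPolicy(requires_sign_in=False, requires_confirmation=False),
    "export_report": ActionPolicy(requires_sign_in=True, requires_confirmation=False),
    "start_checkout": ActionPolicy(requires_sign_in=True, requires_confirmation=True),
}


def authorize(action, signed_in, confirmed, audit_log):
    """Return a decision string and append it to the audit log."""
    policy = POLICIES.get(action)
    if policy is None:
        decision = "deny: unknown action"
    elif policy.requires_sign_in and not signed_in:
        decision = "deny: sign-in required"
    elif policy.requires_confirmation and not confirmed:
        decision = "hold: needs user confirmation"
    else:
        decision = "allow"
    audit_log.append({"action": action, "signed_in": signed_in,
                      "confirmed": confirmed, "decision": decision})
    return decision


log = []
print(authorize("view_pricing", signed_in=False, confirmed=False, audit_log=log))
print(authorize("start_checkout", signed_in=True, confirmed=False, audit_log=log))
print(authorize("delete_account", signed_in=True, confirmed=True, audit_log=log))
```

Unknown actions are denied by default, so adding a new capability requires someone to decide its permission level first.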

For SEO teams, the trust layer also includes public evidence. If an agent checks your brand and finds inconsistent descriptions across profiles, old pricing pages in the index, or third-party reviews that contradict your claims, it may avoid a confident recommendation.

That is why brand entity visibility belongs in the protocol conversation. Agents need to know who you are, what you offer, where you operate, and whether other sources support those claims.

Which Businesses Should Move First?

Businesses with structured data, repeatable actions, and high-value delegated tasks should move first.

Not every website needs the same protocol roadmap. A small brochure site should not spend months implementing commerce protocols. An ecommerce marketplace, SaaS product, travel platform, healthcare directory, or local booking platform may have a stronger case.

| Business Type | First Practical Move |
|---|---|
| Ecommerce stores | Clean product data, policies, merchant identity, and checkout facts |
| SaaS companies | Improve documentation, pricing clarity, plan data, and integration pages |
| Local service businesses | Standardize name, address, and phone (NAP), services, service areas, reviews, and booking paths |
| Publishers | Improve topic hubs, structured content, authorship, and cited sources |
| Marketplaces | Normalize listings, availability, reviews, and seller policies |
| Agencies | Clarify services, proof, team expertise, case studies, and contact workflows |

The best first project is usually not “implement every protocol.” It is choosing one valuable delegated task and making the supporting data reliable.

For example, an SEO agency might start with a stronger service knowledge base and lead qualification workflow. An ecommerce store might start with product feeds, return policy clarity, and checkout capability mapping. A SaaS company might start with documentation endpoints and plan comparison data.

What Are the Common Mistakes?

The most common mistake is confusing protocol awareness with protocol readiness.

A team can know every acronym and still have a site that agents cannot use. The work remains operational, technical, and editorial.

Avoid these mistakes:

- Implementing protocols before cleaning core data.

- Treating AI agents as normal crawlers.

- Letting structured data drift away from visible content.

- Exposing actions without scoped permissions.

- Ignoring server logs and bot behavior.

- Assuming one protocol will dominate every use case.

- Forgetting that third-party sources may shape agent recommendations.

- Publishing generic AI content without useful examples or sources.

The stronger approach is incremental. Audit the facts. Fix the content model. Strengthen structured data. Improve internal links. Map delegated tasks. Then choose the protocol layer that fits.

How Do Agentic Protocols Fit Into SEO Strategy?

Agentic protocols fit into SEO strategy as an infrastructure layer for AI-mediated discovery, evaluation, and action.

Traditional SEO still gets pages discovered. AI SEO helps pages and brands survive summarization, citation, and recommendation. Agentic protocol readiness helps AI systems act on reliable information when the user wants more than an answer.

That creates a three-part roadmap:

| Stage | SEO Focus | Protocol Readiness Focus |
|---|---|---|
| Discovery | Crawling, indexing, rankings, internal links | Make important resources accessible |
| Evaluation | Content quality, citations, entities, proof | Make claims structured and verifiable |
| Action | Conversion paths, UX, forms, checkout | Make tasks safe, callable, and consent-aware |

This is why agentic protocols should not sit only with developers. SEO, product, engineering, legal, analytics, and security all touch the outcome.

The SEO team brings the map of user intent, search demand, content gaps, SERP competitors, answer-engine sources, and conversion journeys. Engineering brings implementation. Security defines boundaries. Product chooses which actions deserve agent access. Analytics measures whether the work changes visibility, referrals, assisted conversions, or lead quality.

What Should You Do in the Next 90 Days?

In the next 90 days, most SEO teams should prepare for agentic AI protocols by improving the information layer, not by chasing every new spec.

Use this roadmap:

| Timeline | Action | Output |
|---|---|---|
| Weeks 1-2 | Audit crawlability, structured data, and blocked resources | Technical readiness list |
| Weeks 3-4 | Map the top delegated tasks users may ask agents to complete | Agent task inventory |
| Weeks 5-6 | Clean product, service, pricing, policy, and entity facts | Source-of-truth updates |
| Weeks 7-8 | Review third-party evidence and answer-engine mentions | Trust gap list |
| Weeks 9-10 | Choose one pilot layer, such as MCP, NLWeb, or commerce readiness | Pilot brief |
| Weeks 11-12 | Define permissions, logging, and measurement | Launch and risk checklist |

Measure progress with practical signals. Track whether AI systems mention the brand accurately, whether cited sources improve, whether product and service facts stay consistent, whether bot behavior changes, and whether assisted conversions from AI surfaces become visible.

Do not wait for every protocol to stabilize before fixing the basics. Crawlable content, clean structured data, consistent entities, and trusted evidence already matter today. Agentic protocols simply make the cost of messy information more obvious.

How Do You Measure Protocol Readiness?

Measure protocol readiness with operational signals, not only rankings.

Rankings still matter because agents and answer engines often retrieve from the open web. But a protocol-ready site also needs to prove that machines can access facts, understand context, and complete safe actions without creating risk.

Use a mixed scorecard:

| Metric | What to Track | Why It Matters |
|---|---|---|
| Fact consistency | Differences between visible pages, schema, feeds, and third-party profiles | Agents tend to hedge or withhold a recommendation when sources conflict |
| Retrieval quality | Which pages AI systems cite or summarize for priority prompts | Shows whether your content enters the answer layer |
| Task completion | Whether an agent can find pricing, compare options, or submit a test workflow | Measures action readiness, not just visibility |
| Bot access | AI crawler activity, blocked resources, server errors, and rate-limit patterns | Reveals whether automated systems can reach useful assets |
| Consent coverage | Actions with explicit confirmation and scoped permissions | Reduces risk in agent-led workflows |
| Source freshness | Age of product, policy, documentation, and profile updates | Prevents agents from using stale evidence |

This measurement should live close to existing SEO reporting. Add a section to monthly reports for AI answer visibility, protocol readiness, and agent task tests. Record the platform, prompt, date, cited URLs, mentioned brands, and whether the answer matches the intended facts.

For ecommerce, add test tasks such as “find a product under a price limit,” “compare return policies,” and “check whether this item ships to a target location.” For SaaS, test “compare plans,” “find integration limits,” and “summarize migration support.” For service businesses, test “identify service areas,” “find proof of expertise,” and “book or request a consultation.”

Do not overfit to one AI platform. Test across the systems your audience uses, then compare patterns. If multiple tools fail on the same fact, the problem is probably your evidence layer. If one tool fails and others succeed, the issue may be platform-specific retrieval or freshness.
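
That comparison rule is simple enough to automate once test results are recorded. The sketch below is one way to do it, assuming each test is logged with a platform, the fact being checked, and whether the answer was correct. The platform names and facts are placeholders.

```python
from collections import defaultdict


def triage(results):
    """Classify each tested fact based on which platforms answered it correctly."""
    by_fact = defaultdict(dict)
    for row in results:
        by_fact[row["fact"]][row["platform"]] = row["correct"]
    report = {}
    for fact, outcomes in by_fact.items():
        failed = sorted(name for name, ok in outcomes.items() if not ok)
        if not failed:
            report[fact] = "ok"
        elif len(failed) >= 2:
            report[fact] = "check evidence layer"
        elif len(outcomes) > 1:
            report[fact] = f"platform-specific: {failed[0]}"
        else:
            report[fact] = "inconclusive: test more platforms"
    return report


# Hypothetical test log; platform names are placeholders.
RESULTS = [
    {"platform": "assistant_a", "fact": "return_window", "correct": False},
    {"platform": "assistant_b", "fact": "return_window", "correct": False},
    {"platform": "assistant_c", "fact": "return_window", "correct": True},
    {"platform": "assistant_a", "fact": "starting_price", "correct": True},
    {"platform": "assistant_b", "fact": "starting_price", "correct": False},
    {"platform": "assistant_a", "fact": "service_area", "correct": True},
]

report = triage(RESULTS)
for fact, status in report.items():
    print(fact, "->", status)
```

Facts flagged for the evidence layer go back to the consistency audit. Platform-specific failures are worth rechecking after a few weeks before anyone rewrites content.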

References and Resources

Sources used

- Anthropic: Introducing the Model Context Protocol

- Model Context Protocol specification

- Google Developers Blog: Announcing the Agent2Agent Protocol

- Google A2A GitHub repository

- Microsoft: Introducing NLWeb

- Microsoft NLWeb GitHub repository

- WebMCP Draft Community Group Report

- Agentic Commerce Protocol GitHub repository

- Stripe: ACP and Instant Checkout in ChatGPT

- Universal Commerce Protocol documentation

- Google Cloud: Agent Payments Protocol